Amazon’s New AI Tool for Faster Vertical Video Creation Welcomes Fox and NBCUniversal as Early Adopters

Amazon’s AWS is debuting a new AI-driven product designed to assist broadcasters in adapting to the evolving demands of social media.

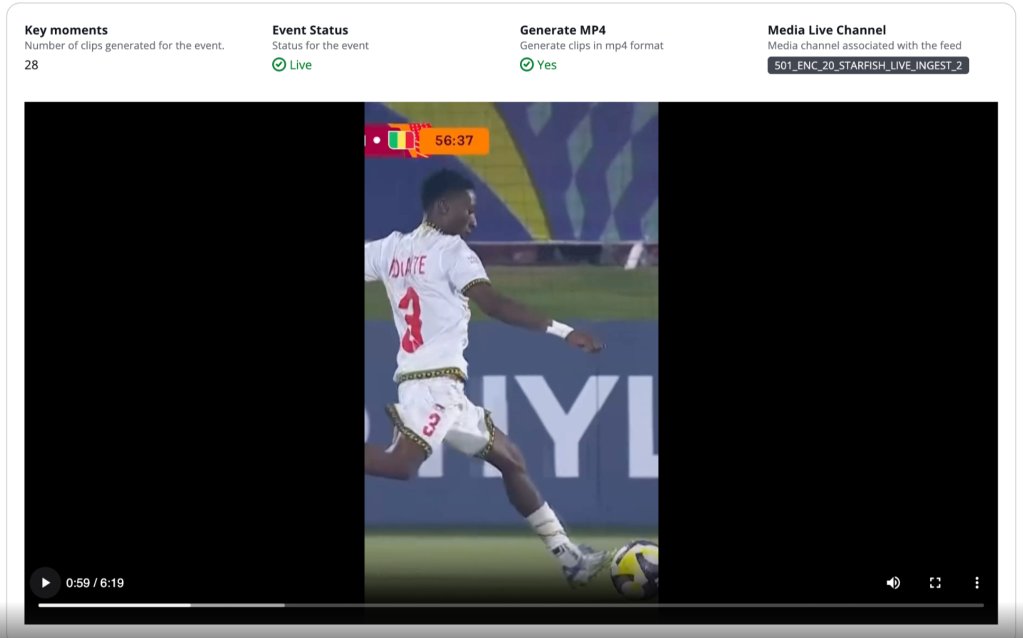

AWS Elemental Inference allows for the conversion of both live and on-demand footage into vertical videos specifically optimized for mobile and social media platforms.

With an increasing emphasis on vertical video formats for sports and other live events, networks and streaming services are focusing on producing content designed for mobile viewing. Successful vertical videos can gain traction on platforms such as TikTok, Instagram Reels, and YouTube Shorts, driving engagement and attracting younger audiences. However, the traditional process has often been labor-intensive, requiring manual edits to render footage captured by the main crew into mobile-friendly formats.

AWS claims its new offering employs a “process once, optimize everywhere” approach and achieves latency of 6 to 10 seconds, compared with up to a minute for competing tools.


The effectiveness of vertical videos was highlighted during the Winter Olympics, according to a spokesperson who noted that traditional methods frequently leave little window for engagement. “Minutes and hours can go by and then the moment’s gone,” she remarked, emphasizing that the new product allows broadcasters and streamers to take a more proactive role in capturing viral and timely moments.

Ricardo Perez-Selsky, senior director of digital production operations at Fox Sports, said the AWS tools have significantly reduced turnaround times, cutting a process that took 45 minutes to an hour down to less than 15 minutes. “And that’s with a plussed-up storytelling aspect,” he said in an interview, adding that the tool automates the previously tedious task of converting 16×9 video into the 9×16 format.
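To give a sense of the arithmetic behind a 16×9 to 9×16 conversion, here is a minimal sketch in Python. It is an illustration only, not AWS's implementation: the function name `vertical_crop_window` and its parameters are hypothetical, and it assumes the simplest approach of keeping the full frame height and cutting a narrow vertical window around a point of interest.

```python
def vertical_crop_window(frame_w, frame_h, center_x, target_ratio=(9, 16)):
    """Return (left, top, width, height) of a full-height crop window.

    Hypothetical helper: keeps the full frame height and picks a window
    with the target aspect ratio, centered on center_x where possible.
    """
    ratio_w, ratio_h = target_ratio
    # Width that gives the target ratio at full height, rounded down to an
    # even number of pixels (many video encoders expect even dimensions).
    crop_w = (frame_h * ratio_w // ratio_h) // 2 * 2
    if crop_w > frame_w:
        raise ValueError("frame is too narrow for a full-height crop")
    # Center the window on the point of interest, then clamp it so it
    # never extends past the left or right edge of the frame.
    left = int(round(center_x - crop_w / 2))
    left = max(0, min(left, frame_w - crop_w))
    return left, 0, crop_w, frame_h


if __name__ == "__main__":
    # A 1920x1080 frame with the action in the middle of the picture.
    print(vertical_crop_window(1920, 1080, 960))
```

For a 1920×1080 frame, the window is only 606 pixels wide, less than a third of the original picture, which is why choosing where to place it matters so much.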

The service uses an autonomous AI application that analyzes video in real time and automatically applies the required optimizations. AWS stated that it can handle vertical video cropping and clip generation autonomously, carrying out multiple transformations without human intervention.

Perez-Selsky explained that the goal in creating vertical clips is to ensure the final product does not overwhelm the audience. “This is now multiplied with vertical video because the action is moving that much faster because you have less space to actually capture the action,” he noted, emphasizing the need for the model to emulate human-like camera movements effectively.
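One common way to keep an automated crop from lurching around is to smooth its position over time. The Python sketch below is an assumption-laden illustration of that general idea, not a description of how the AWS model works: the function `smooth_centers`, the smoothing factor `alpha`, and the per-frame limit `max_step` are all hypothetical.

```python
def smooth_centers(raw_centers, alpha=0.2, max_step=40.0):
    """Smooth a sequence of per-frame crop centers (in pixels).

    Hypothetical helper: each frame moves the crop a fraction (alpha) of
    the way toward the detected point of interest, and never more than
    max_step pixels, roughly like an operator panning a camera.
    """
    smoothed = []
    current = None
    for target in raw_centers:
        if current is None:
            # Start exactly on the first detected position.
            current = float(target)
        else:
            # Move part of the way toward the new target...
            step = alpha * (target - current)
            # ...but cap the pan speed so the frame never jumps.
            step = max(-max_step, min(max_step, step))
            current += step
        smoothed.append(current)
    return smoothed


if __name__ == "__main__":
    # The detected action jumps 1,000 pixels in a single frame;
    # the smoothed crop follows it gradually instead.
    print(smooth_centers([0, 1000, 1000, 1000]))
```

A lower `alpha` or `max_step` gives a calmer picture but risks letting fast action slip out of the narrow frame, which is the trade-off Perez-Selsky describes.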